Bio

Mission

Projects

Digital Forest at Being Human Festival, Bath 2024

An interactive projected metaphor of the autumn forest. It took photographs and sound recordings from event attendees' mobile phones, walked attendees through modifying them with free media tools, and added each one as a leaf to the digital forest. The fallen media leaves activate when disturbed, playing their sound and highlighting their image. The prototype can be played here.

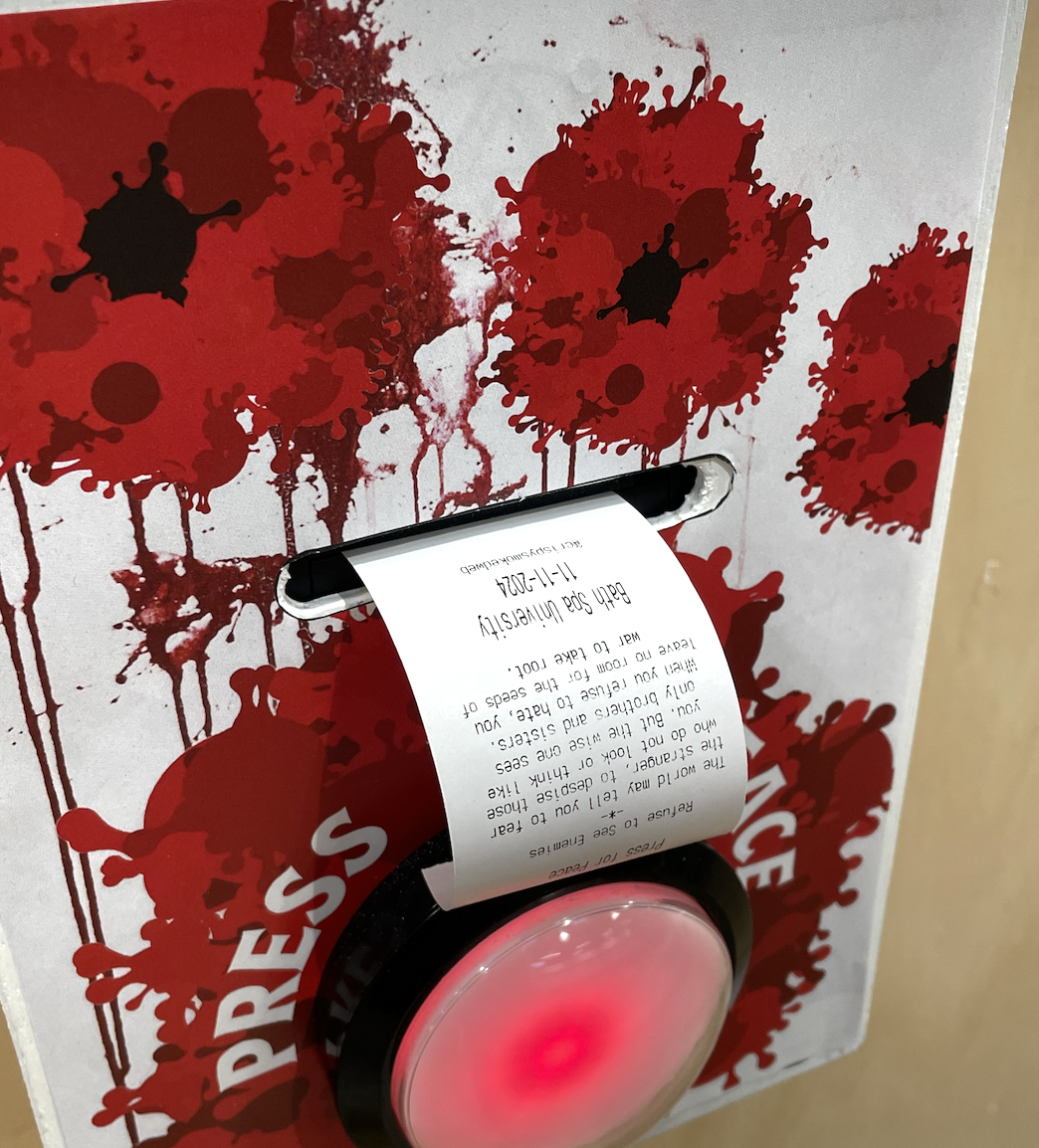

Press for Peace, peace activism bot

BRAT night at the EMERGE showcase 2024, with Nigel Fryatt

Nigel Fryatt of Polymathic hosted a live coded visuals event as part of EMERGE’s end-of-residency showcase, where Nigel, I and new resident Charlotte Piper each brought our own coding and visual approaches to accompany the evening's DJ sets.

I enjoyed refining how I use MIDI controllers with my code, for a more embodied way of controlling the visuals' behaviour, and handed control over to some audience members to play with.

Data Dealers 2 and Fulfilment Centre at Shangri-La, Glastonbury Festival 2024

The Creative Computing team were invited back to Shangri-La to create interactive, alternative narratives around consumption, technology, data and automation. Data Dealers (playfully and theatrically) captured visitors’ data and sold it to feed their own insatiable need to consume. The Fulfilment Centre presented a critical take on wellness as an industry that exploits identity and insecurity. Collaborators included Coral Manton, Naomi Smyth, Nigel Fryatt, Sam Sturtivant and Sam Kaighin. For an interesting write-up, see this article by Tom May.

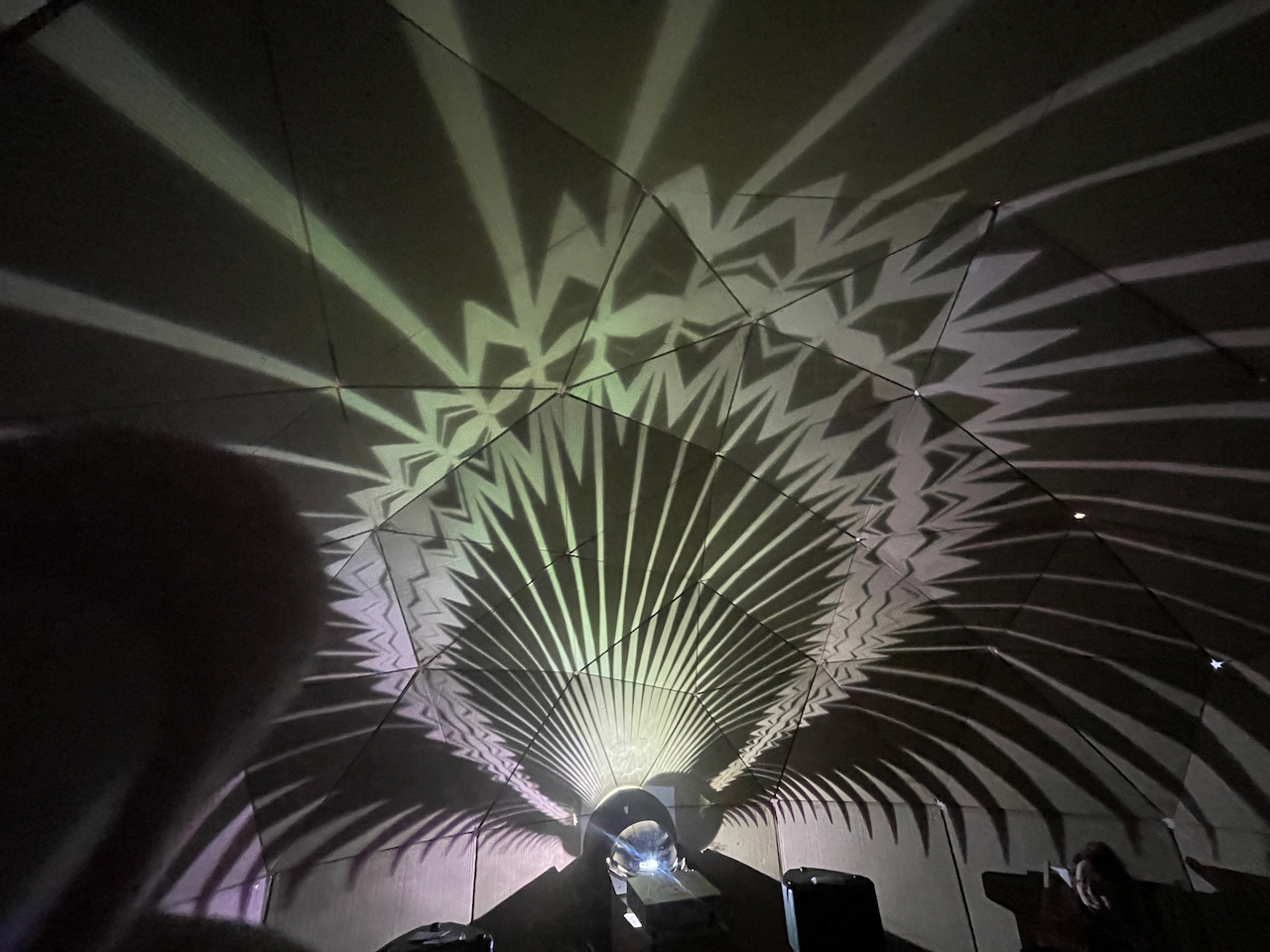

Alt-Wilderness (DIY cardboard projection dome) at Fringe Arts Bath, with Nigel Fryatt and EMERGE

Alt-Wilderness was a pop-up 5-metre cardboard projection dome, constructed on site and used to host a curated selection of art for Fringe Arts Bath in May 2024. The brainchild of Nigel Fryatt @datarav3, built with the collective skills and muscle of residents in the EMERGE programme, the dome provided a venue for images, film, original music and a party or two. The build is documented here.

Beyond Earth with Angel Greenham and James Thornton

For Beyond Earth, Angel Greenham took infrasound recordings from the stratosphere, processed by James Thornton, and produced a variety of visualisations in some of her signature painted monochrome motifs. I used p5.js to create a real-time visualiser of the audio, leaning heavily into Angel’s motifs, to project inside the Alt-Wilderness dome at Fringe Arts Bath.

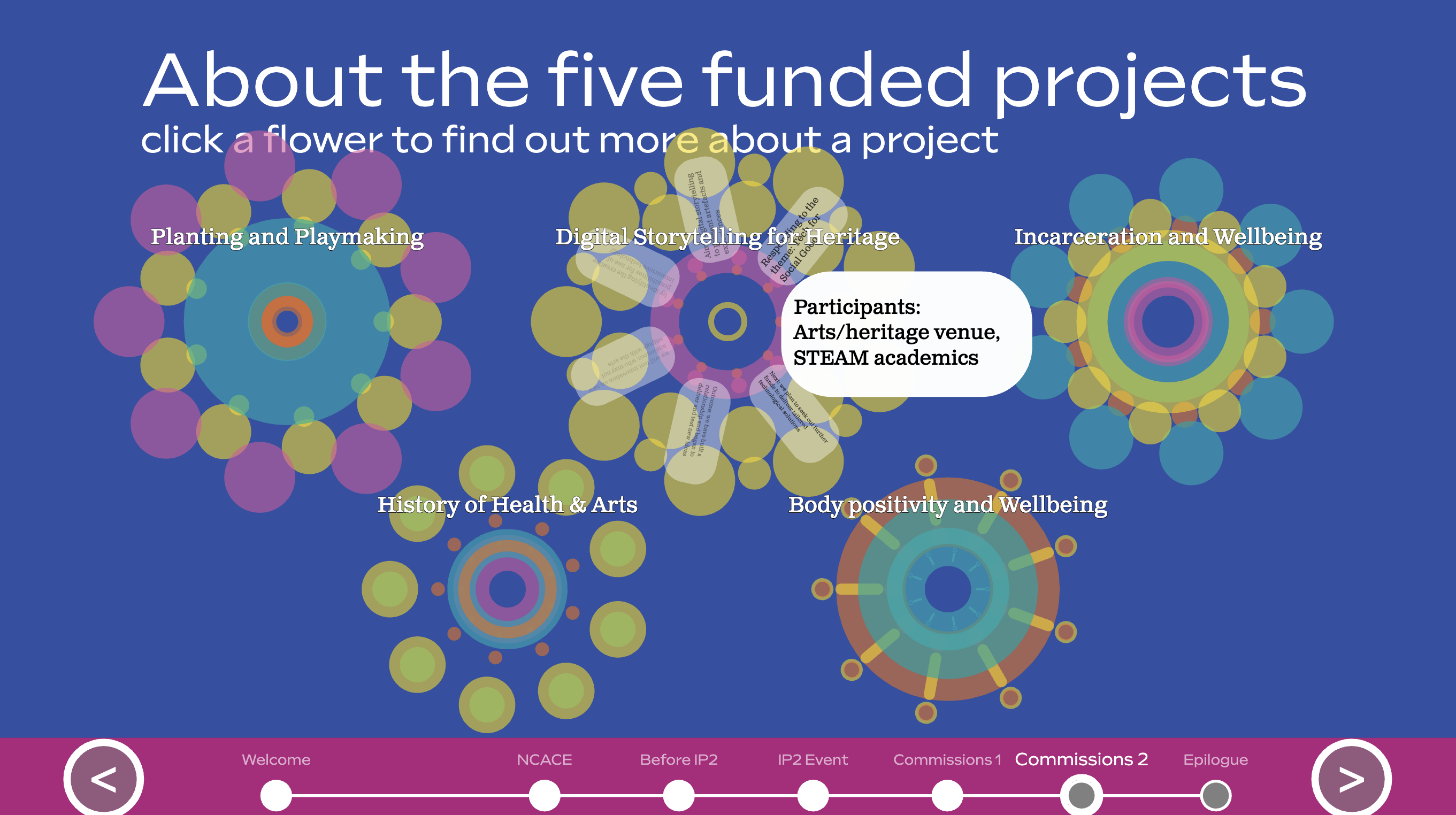

Interactive Story for NCACE

Wood Wide Web for Kew, Wakehurst

Data Dealers Emporium at Glastonbury Festival

16×16 at Dareshack

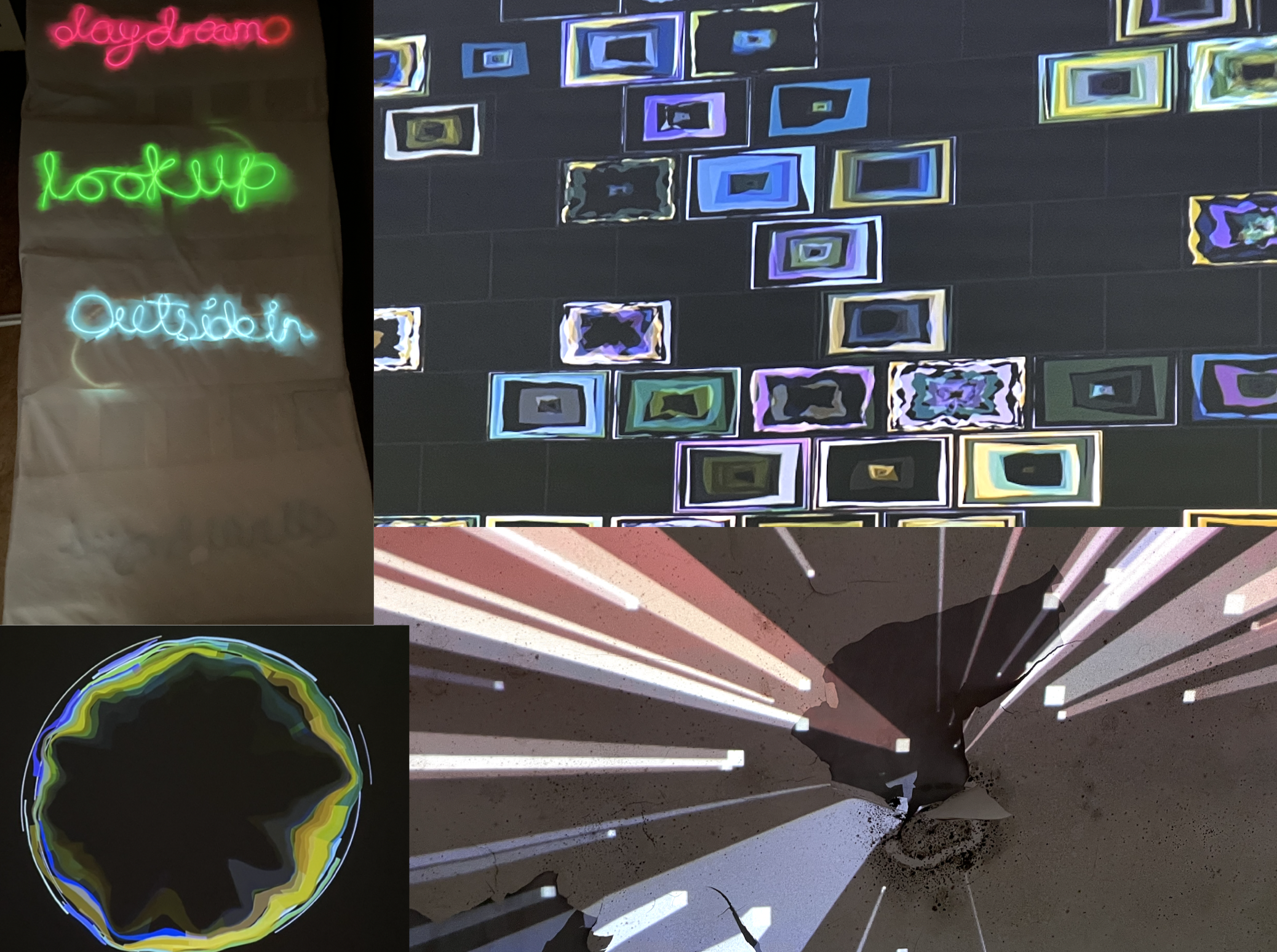

Outside In at Shepton Mallet Prison for Somerset Art Weeks 2022

Outside In is a group exhibition hosted in, and responding to, the cells in C-Wing at Shepton Mallet Prison. The show was curated by Luminara Florescu for Somerset Art Weeks in 2022. I used a combination of projected original animations created with p5.js, EL wire sculpture and a soundscape of found sounds to create an installation that imagined a world beyond the walls.

Prototype data explorer for Student experience data

A prototype data explorer for Bath Spa University's NET project, designed to let open day visitors explore student experience information and data in a playful way.

Mapping Imagination: Visualising a Creative and Cultural Ecosystem

Metamorphosis – a group mixed media art exhibition in Frome by Emerge Studio

Residents of Emerge Studio in Bath organised a group mixed media art exhibition at Gallery at the Station in Frome, Somerset, in July 2022. The show included a work I developed in collaboration with Coral Manton, using a simple algorithm and generative layouts to construct plausible advert-like statements and ‘truths’. This is part of an ongoing set of developments around algorithms and AI in our lives.

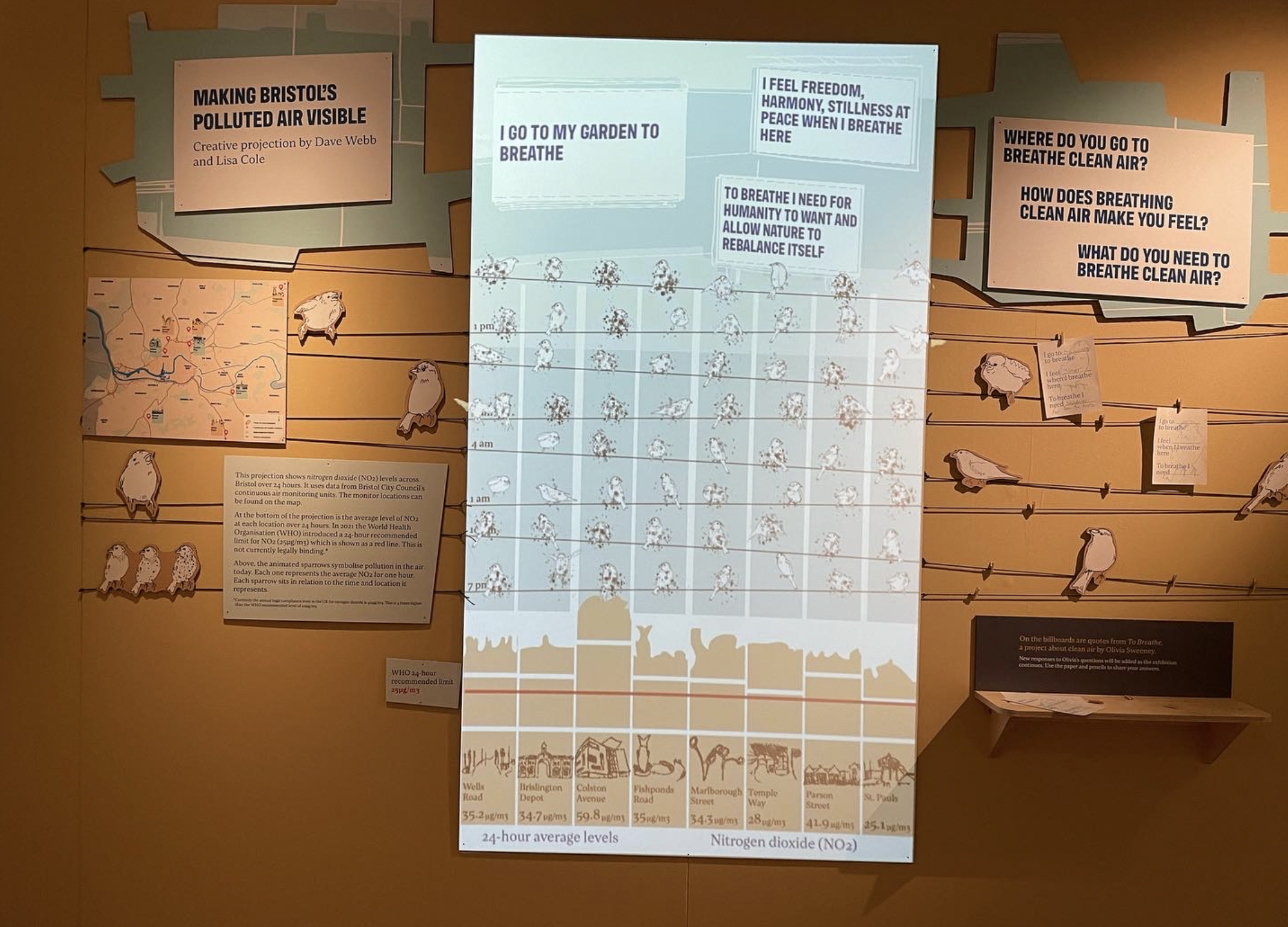

MShed – Think Global Act Bristol, Air quality data visualisation

A data visualisation using animated, ‘sooty’ sparrow illustrations to represent air quality around Bristol, using data from Bristol City Council's public sensors. The piece was conceived and designed with artist Lisa Cole. The visualisation incorporated designs by the museum staff and illustrations by Lisa, and is made using p5.js with a combination of animated stills and generative effects. The Think Global, Act Bristol exhibition is on at MShed, Bristol, UK until October 2022.

Lost Horizon VR hybrid venue and AI Hall of Mirrors at Glastonbury 2022

‘Exquisite Obsolescence’ installation at Fringe Arts Bath, WordPlay Exhibition, June 2022

The machines do not understand the same signals or technologies. There is no common platform or interface. They are not synchronised. They are side by side, connected only by the discussion on media. The two parts of the installation have been developed in isolation, with the two artists occasionally conferring on practicalities. The two elements will meet for the first time at FAB to talk across the decades.

In the Meanwhile 2: creative technology support for three artists

As part of In the Meanwhile 2, a winter light trail of illuminated art works in empty Bath shop fronts in early 2022, I brought a variety of creative technology skills to works by three artists. For Kate McGregor and Juliet Duckworth, I embedded programmed LEDs (controlled by Arduinos) to create effects sympathetic to the art works. For Julie Stark, I created and projected a kaleidoscope of her plant cell images using p5.js.

In the Meanwhile (with Little Lost Robot)

‘Higher’ with the Studio Recovery Fund

Inch by Inch: Beyond the Line of Sight

Lockdown Table

A short film exploring the role of a table as a ‘place’ at the centre of my family’s lockdown life. Created for and submitted as part of the Pause:Small Stories exhibition.

Be More Circular – Showcase talk

At the SWCTN data showcase event, we expanded on our vision for guiding citizens towards more circular, sustainable choices through technology, storytelling and data.

Be More Circular

Be More Circular was a prototype funded by the SWCTN data prototype round. We set out to find engaging ways to make people aware of the impact of their purchases and guide them towards more circular, sustainable choices using storytelling and interactive web experiences. The prototype can be found here.

The Studio Breathes

The Studio Breathes gives studio residents a mobile web app that captures their breathing rhythm as they trace it on screen along an elliptical path, tracing up as they inhale and down as they exhale. The very act of thinking about your breathing requires you to become conscious of an otherwise autonomic process. This is likely to change the breathing itself: many people will breathe more slowly and deeply.

Participants are invited to reflect on their role in the studio, whether physically present, or working remotely. Reflective prompts are provided by Ximena, who has used such prompts to encourage participants to undertake immersive activities in her own work.

Finally, after submitting their breathing, and thus becoming present, they can observe a visualisation of their own breathing alongside the collective breathing rhythms of everyone currently present.

More info at http://the.studiobreathes.org/about

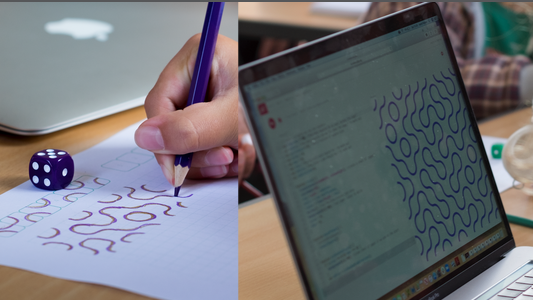

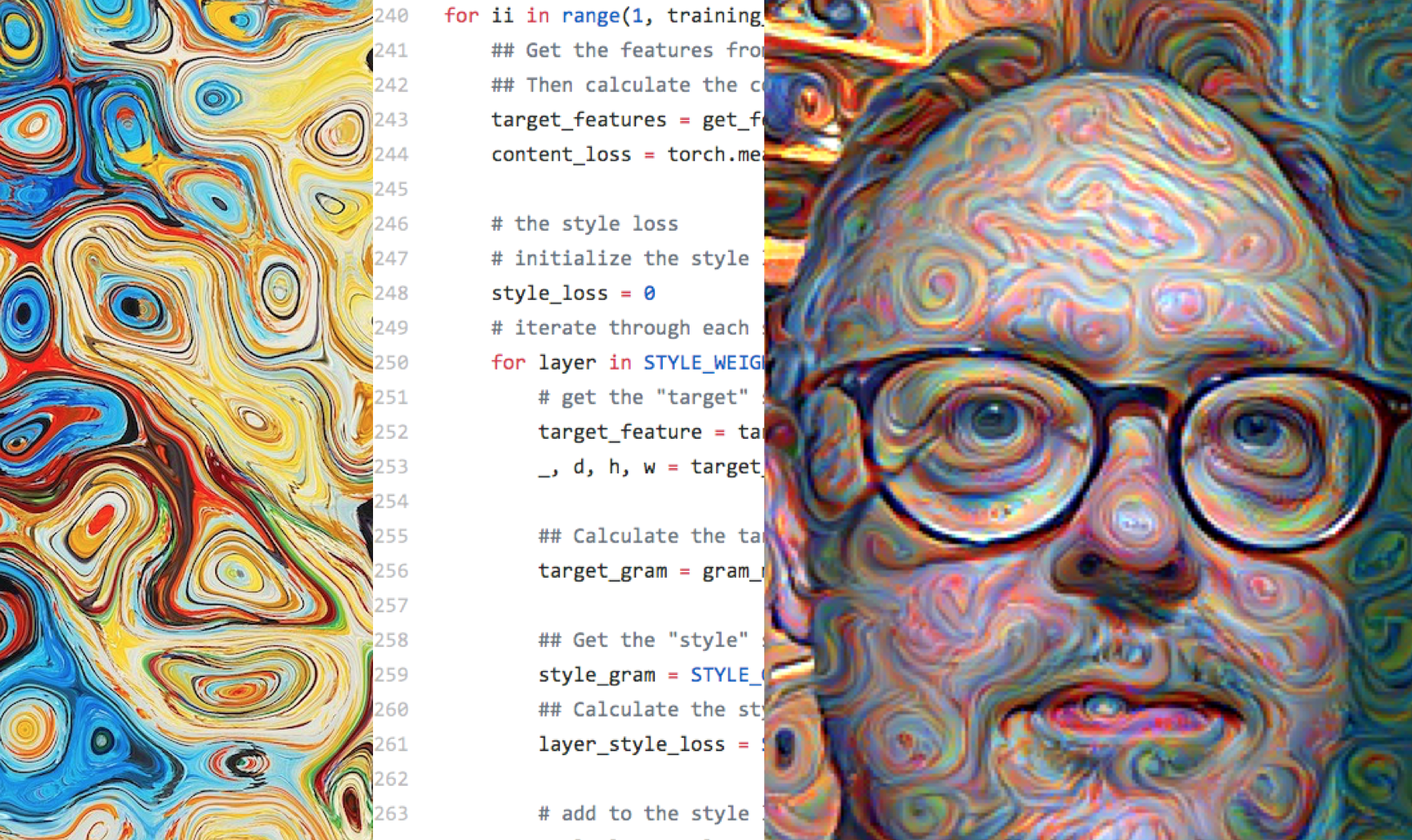

AI for Nervous Humans

AI for Nervous Humans is a workshop for everyone taking a critical look at AI, and then a playful look at how AI can be used easily in creative or practical projects. The emphasis is on making AI accessible while being playful and critical. Development for this workshop was funded by SWCTN.

Control: A Digital Storytelling Experiment

Seb Shrugged

My first ever Unity project is a 2D game built using a good deal of frame-animated pixel art. It was a complete departure from my typical generative art in p5.js and Processing, and gave me the freedom to explore a more narrative style of digital creativity.

A project for my MSc in Creative Computing at Bath Spa University.

Four Sketches

A collection of four recent sketches built in p5.js, exploring themes typical of my work: natural processes and phenomena, echoed in code.

Revolution

Commonwealth

As part of 44AD Gallery’s exhibition for The Art of Communication in the Commonwealth, Discourse and Influence is a p5.js based sketch using flocking style behaviour to show the shifting influence of two opposing voices in a landscape of followers.

Otherwhere, Elsewhen

In a time ruled by COVID-19 and video calls, we find that Here and Now are less definite than they used to be. An exploration of time and place with your webcam, powered by p5.js. Created for, and to be exhibited at, Fringe Arts Bath (FAB) 2020.

OpenFrames – Playground

Remented

Equals Thought?

while(me.sub(data)>0){}

FrunkelWorm

BeHere

See Pilgrims

Playful Sketches Sampler

Community

Involvement and leadership in local creative technology and art groups and activities

Algorithmic Art Workshops for beginners

The Viennese Salon

The Viennese Salon @theviennesesalon is an artists' meetup created by Austrian artist Caroline Vitzthum @vitzthum_caroline as a friendly, cosy space for artists, performers, and makers to share ideas and seek critical dialogue in a safe and open environment. I have been privileged to be invited as a member and a speaker.

Control Shift Network

Creative Coding in Bath

Processing Community Day / You Make the Rules!

- A write-up of the day can be found here.

- Event site

- First You Make the Rules Event

Bath Machine Learning

Early Projects

A selection of very early, pre-digital art projects made over the years

Mobile

c. 1994

Sculpture